Growing Together: Metrics That Spark Peer Momentum

Define Contribution, Not Just Consumption

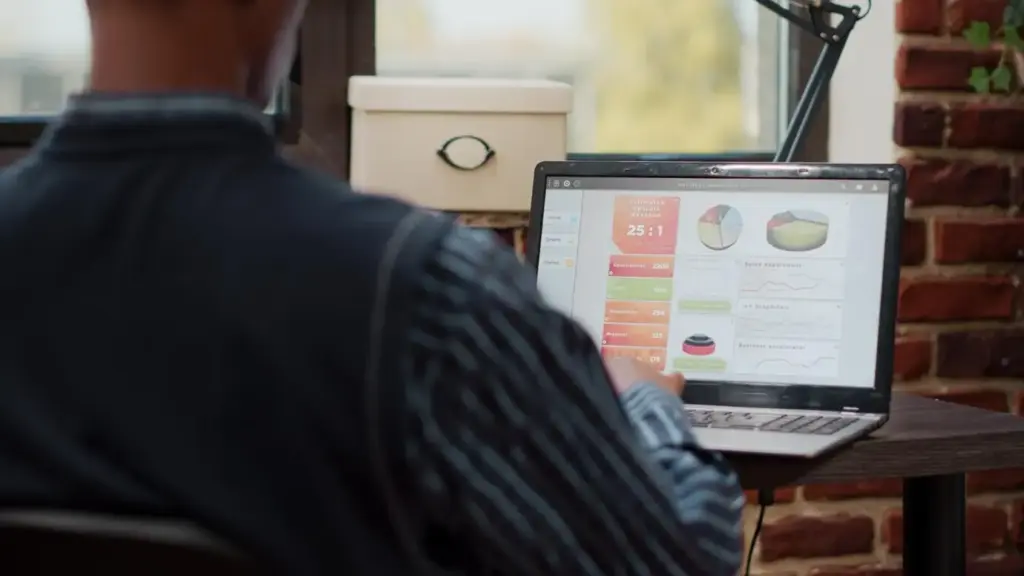

Count what people give, not only what they take. Helpful replies, code reviews, answered questions, shared resources, thoughtful introductions, and mentoring sessions often predict healthier retention and richer outcomes than views or likes. Create precise definitions for what constitutes a meaningful act so contributors are recognized consistently. Ensure these measures encourage generosity without pressuring people to perform. When you highlight contribution, you reinforce the behaviors that multiply value across your network, inviting more peers to participate confidently.

A North Star That Includes Network Health

Choose one guiding measure that reflects both individual success and the health of connections between participants. Instead of focusing solely on revenue or signups, consider a North Star built on a signal such as activated helpers, time to first meaningful support, or responsiveness rates within peer exchanges. Complement it with the ratio of contributors to passive members, which reveals how resilient the network is. A healthy network sustains growth even when campaigns stop, because relationships and rituals continue to generate momentum. Let your North Star make that compounding dynamic visible.
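As a rough illustration of the contributor-to-passive ratio mentioned above, here is a minimal sketch in Python. The member list, the event dictionaries, and the `contributor_ratio` name are all hypothetical; your own platform will define a "contribution event" differently.

```python
def contributor_ratio(members, contribution_events):
    """Return contributors divided by passive members.

    members: iterable of member ids (hypothetical format).
    contribution_events: iterable of dicts with a "member" key, one per
    meaningful act (reply, review, introduction, and so on).
    """
    members = set(members)
    # Only count contributors who are actually in the member list.
    contributors = {e["member"] for e in contribution_events} & members
    passive = members - contributors
    if not passive:
        # Everyone contributed; the ratio is unbounded.
        return float("inf")
    return len(contributors) / len(passive)


members = ["ana", "ben", "chi", "dev"]
events = [
    {"member": "ana", "kind": "reply"},
    {"member": "ana", "kind": "review"},
    {"member": "ben", "kind": "introduction"},
]
ratio = contributor_ratio(members, events)
```

Here two of four members contributed, so the ratio is 1.0. Tracking the same number week over week is likely more informative than any single reading.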

From Vanity to Vital Signals

Retire convenient but shallow metrics. Pageviews, impressions, and inflated member counts feel comforting yet rarely guide improvement in peer collaboration. Replace them with activation-to-contribution conversion, invite acceptance rates, median time to first helpful response, and contribution durability over time. Add reciprocity measures that reveal whether help flows widely or concentrates among a few. Vital signals change behavior when they are visible to teams and contributors alike. They prompt teams to nurture the right moments, celebrate authentic wins, and build systems that scale with integrity.
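Two of the signals above can be computed from a simple reply log. The sketch below assumes a hypothetical data shape (a dictionary of question timestamps and a list of reply tuples with a `helpful` flag) and uses the share of help given by the top helper as one possible, deliberately crude, concentration measure.

```python
from collections import Counter
from datetime import datetime
from statistics import median


def median_hours_to_first_help(questions, replies):
    """questions: {question_id: asked_at}.
    replies: list of (question_id, helper, replied_at, helpful) tuples.
    Questions with no helpful reply yet are ignored."""
    first = {}
    for qid, _helper, at, helpful in replies:
        if helpful and qid in questions and (qid not in first or at < first[qid]):
            first[qid] = at
    waits = [(first[q] - questions[q]).total_seconds() / 3600 for q in first]
    return median(waits) if waits else None


def top_helper_share(replies, k=1):
    """Fraction of helpful replies written by the k most active helpers."""
    counts = Counter(helper for _q, helper, _at, helpful in replies if helpful)
    total = sum(counts.values())
    if total == 0:
        return None
    return sum(c for _name, c in counts.most_common(k)) / total


questions = {
    "q1": datetime(2024, 1, 1, 9, 0),
    "q2": datetime(2024, 1, 1, 10, 0),
}
replies = [
    ("q1", "ben", datetime(2024, 1, 1, 10, 0), False),  # not marked helpful
    ("q1", "ana", datetime(2024, 1, 1, 11, 0), True),
    ("q2", "ana", datetime(2024, 1, 1, 14, 0), True),
    ("q2", "chi", datetime(2024, 1, 1, 15, 0), True),
]
hours = median_hours_to_first_help(questions, replies)
share = top_helper_share(replies)
```

A share close to 1 suggests help is concentrated in one person. Because this version skips unanswered questions, it is worth reporting the unanswered count alongside the median.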

Instrumentation for Human Interactions

Designing Feedback Loops that Learn

Experimentation Across the Network

A/B Tests That Honor Communities

Randomize at the group or channel level when people influence one another, preventing contamination that blurs results. Predefine minimum help quality thresholds and stop a test if quality dips below them. Keep experiments short to limit disruption. Communicate purpose, expected duration, and safeguards clearly to participants. Afterward, publish findings and restore parity. When people see ethical care embedded in your methods, they remain willing to participate, and you learn faster without eroding trust or undermining the very connections that drive compounding growth.
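Group-level randomization can be sketched with a deterministic hash, so that every member of a channel lands in the same arm. The function names, the experiment label, and the membership mapping below are hypothetical; this is one simple approach, not the only one.

```python
import hashlib


def assign_arm(group_id, experiment, arms=("control", "treatment")):
    """Map a whole group to one arm, stable across runs.

    Hashing the experiment name together with the group id means a new
    experiment reshuffles groups instead of reusing the old split.
    """
    digest = hashlib.sha256(f"{experiment}:{group_id}".encode()).hexdigest()
    return arms[int(digest, 16) % len(arms)]


def assign_members(memberships, experiment):
    """memberships: {member_id: group_id}. Members inherit their group's arm."""
    return {m: assign_arm(g, experiment) for m, g in memberships.items()}


memberships = {"ana": "python-help", "ben": "python-help", "chi": "intros"}
assignment = assign_members(memberships, "welcome-prompt-v1")
```

With only a handful of groups, a hash split can be noticeably unbalanced, so it helps to check arm sizes before launch. Members who belong to several groups need an explicit rule, which this sketch leaves out.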

Cohorts, Causality, and Context

Track cohorts by first contribution date, first invite sent, or first accepted answer received. Compare outcomes across cohorts exposed to different prompts or onboarding paths. Use difference-in-differences or synthetic controls when randomization is not possible. Keep a clear causal story: which mechanism changed behavior, for whom, and in what context. Validate results with qualitative follow-ups that confirm the narrative. When numbers and stories reinforce each other, you can confidently scale improvements without exporting a solution that only fits a narrow slice.
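For the difference-in-differences comparison mentioned above, the basic arithmetic is small enough to show directly. The sketch assumes you already have a per-member outcome (here, hypothetical weekly contribution counts) for an exposed cohort and a comparison cohort, before and after the change.

```python
from statistics import mean


def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Change in the treated cohort minus change in the comparison cohort."""
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


# Hypothetical weekly contributions per member.
effect = diff_in_diff(
    treated_before=[2, 4],
    treated_after=[5, 7],
    control_before=[2, 2],
    control_after=[3, 3],
)
```

The treated cohort rose by 3 and the comparison cohort by 1, so the estimated effect is 2 contributions per member. The estimate is only credible if both cohorts would have followed similar trends without the change, which is worth checking against earlier periods and the qualitative follow-ups.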

Guardrails and Reversibility

Define guardrail metrics such as helpful response rate, newcomer satisfaction, and contribution durability. If any falls below a threshold, halt and revert quickly. Prefer changes that are easy to roll back and limit the blast radius of risky ideas. Keep emergency playbooks handy, including communication templates that acknowledge mistakes transparently. Reversibility lowers fear, enabling bolder exploration without reckless bets. When people trust you to protect the community during experiments, they continue engaging, providing the feedback necessary to refine better, safer designs.
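A guardrail check can be as simple as comparing current readings to agreed floors. The metric names below come from the paragraph above, while the threshold values, the data shape, and the `breached_guardrails` helper are illustrative assumptions.

```python
def breached_guardrails(current, thresholds):
    """Return the sorted names of metrics that fell below their floor.

    A metric missing from `current` counts as breached, on the assumption
    that a silent gap in measurement should pause an experiment too.
    """
    return sorted(
        name
        for name, floor in thresholds.items()
        if current.get(name, float("-inf")) < floor
    )


# Hypothetical floors; pick values from your own baseline.
thresholds = {
    "helpful_response_rate": 0.60,
    "newcomer_satisfaction": 4.0,
    "contribution_durability": 0.50,
}
current = {
    "helpful_response_rate": 0.70,
    "newcomer_satisfaction": 3.8,
    # contribution_durability was not reported this period
}
breached = breached_guardrails(current, thresholds)
should_halt = bool(breached)
```

In this example two guardrails are breached, so the experiment would be halted and reverted. Wiring the result into an alert or a feature flag is left to whatever tooling you already use.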

Stories from the Field

Make It Actionable Today

A One-Week Measurement Makeover

Day one, define a clear North Star that includes contribution. Day two, map the three moments that most influence the move from activation to helpful participation. Day three, instrument those events. Day four, collect qualitative feedback and tag themes. Day five, close the loop with a small improvement. Day six, share results and credits widely. Day seven, reflect, prune vanity metrics, and lock in your next experiment. This quick cycle builds confidence while demonstrating how small, respectful changes compound into meaningful, peer-powered progress.

Turning Insights into Rituals

Make insights durable by embedding them in shared habits. Host a weekly fifteen-minute review of contribution signals, then acknowledge one contributor whose action reduced friction for others. Refresh onboarding prompts monthly, based on observed sticking points. Rotate ownership so many people learn the playbook. Publish concise updates that explain what changed and who helped. When routines honor both data and humans, the culture remembers what works without heavy process. Rituals transform scattered wins into reliable momentum that helps newcomers feel welcome.

Join the Conversation and Share Your Signals

What is the one metric that best predicts healthy collaboration in your world? Tell us how you discovered it, what you tried, and where it misled you. Post examples of loops you closed and celebrations that mattered. Ask for a second opinion on your instrumentation or experiment design. We will gather your stories, learn together, and refine these practices openly. Subscribe, comment, and invite a colleague who thrives on collective progress. Your insight might ignite someone else’s breakthrough this week.
